The launch comes as privacy concerns around AI assistants continue growing among both consumers and businesses. Cloud AI tools offer access to larger and more capable models, but they also require sending data to external providers. Local AI models offer stronger privacy guarantees but still struggle with hardware requirements and performance limitations on many systems.

Osaurus is designed around the idea that users may want access to both environments depending on the task. Users can choose smaller local models for privacy-sensitive work while still connecting to cloud systems for more demanding operations that require greater compute resources.

The project evolved out of an earlier AI desktop assistant called Dinoki, which Osaurus co-founder Terence Pae described as an “AI-powered Clippy.” According to Pae, customer concerns about ongoing token costs pushed him to rethink how AI assistants could operate locally instead of relying entirely on cloud infrastructure.

“That’s how Osaurus started,” Pae, a former Tesla and Netflix software engineer, told TechCrunch. “You can do pretty much everything on your Mac locally, like browsing your files, accessing your browser, accessing your system configurations. I figured this would be a great way to position Osaurus as a personal AI for individuals.”

Osaurus supports a wide range of local and cloud-connected models, including MiniMax M2.5, Gemma 4, Qwen3.6, GPT-OSS, Llama, DeepSeek V4, Apple’s on-device foundation models, and Liquid AI’s LFM family. It also connects to cloud providers and model runtimes including OpenAI, Anthropic, Gemini, xAI/Grok, Venice AI, OpenRouter, Ollama, and LM Studio.

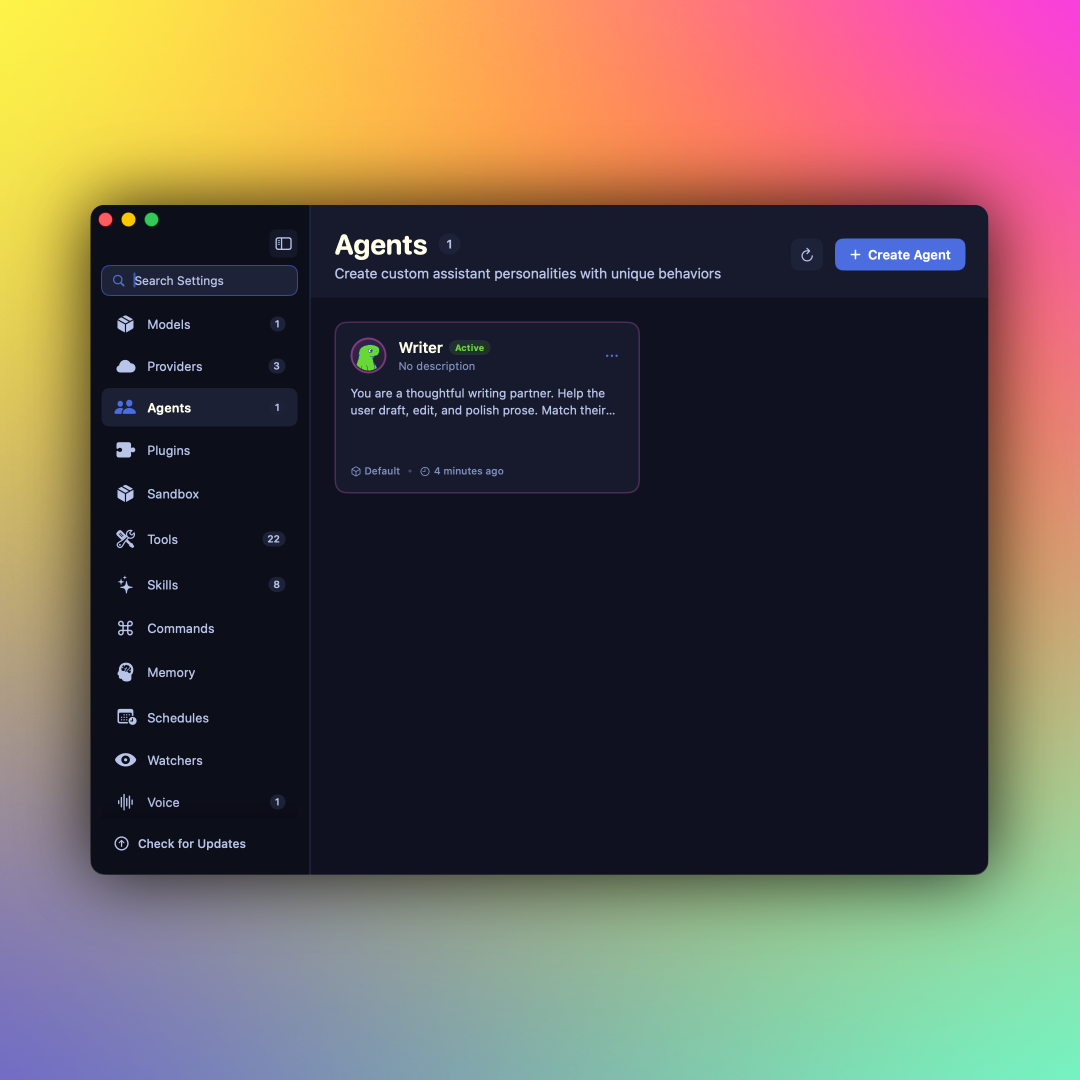

The software also includes more than 20 built-in plugins covering Mail, Calendar, Git, Filesystem, Browser, Vision, Search, XLSX, PPTX, Music, and other macOS integrations. More recently, the company added voice support as well.

Unlike more developer-oriented local AI tools such as OpenClaw or Hermes, Osaurus is targeting mainstream Mac users with a more accessible interface. The company also says the system addresses some security concerns associated with local AI tooling by running inside a hardware-isolated virtual sandbox that limits what the AI can access.

The app still requires substantial hardware resources for running larger local models. According to Pae, users will need at least 64 GB of RAM for local AI workloads, while larger models like DeepSeek V4 may require systems with roughly 128 GB of RAM.

Still, Pae believes local AI capabilities are improving rapidly as model efficiency advances. “I can see the potential of it, because the intelligence per wattage, which is like the metric for local AI, has been going up significantly,” he said. “Last year, local AI could barely finish sentences, but today it can actually run tools, write code, access your browser, and order stuff from Amazon. It’s just getting better and better.”

Since launching publicly nearly a year ago, Osaurus says the project has been downloaded more than 112,000 times. The company is currently participating in the New York-based startup accelerator Alliance and is exploring future enterprise use cases in sectors like healthcare and legal services, where local AI deployments could help address privacy and compliance concerns.

Pae also argued that growing local AI adoption could eventually reduce dependence on large-scale AI data centers. “Instead of relying on the cloud, they can actually deploy a Mac Studio on-prem, and it should use substantially less power,” he said. “You still have the capabilities of the cloud, but you will not be dependent on a data center to be able to run that AI.”

This analysis is based on reporting from techbuzz and TechCrunch.

Images courtesy of Osaurus, Inc.

This article was generated with AI assistance and reviewed for accuracy and quality.