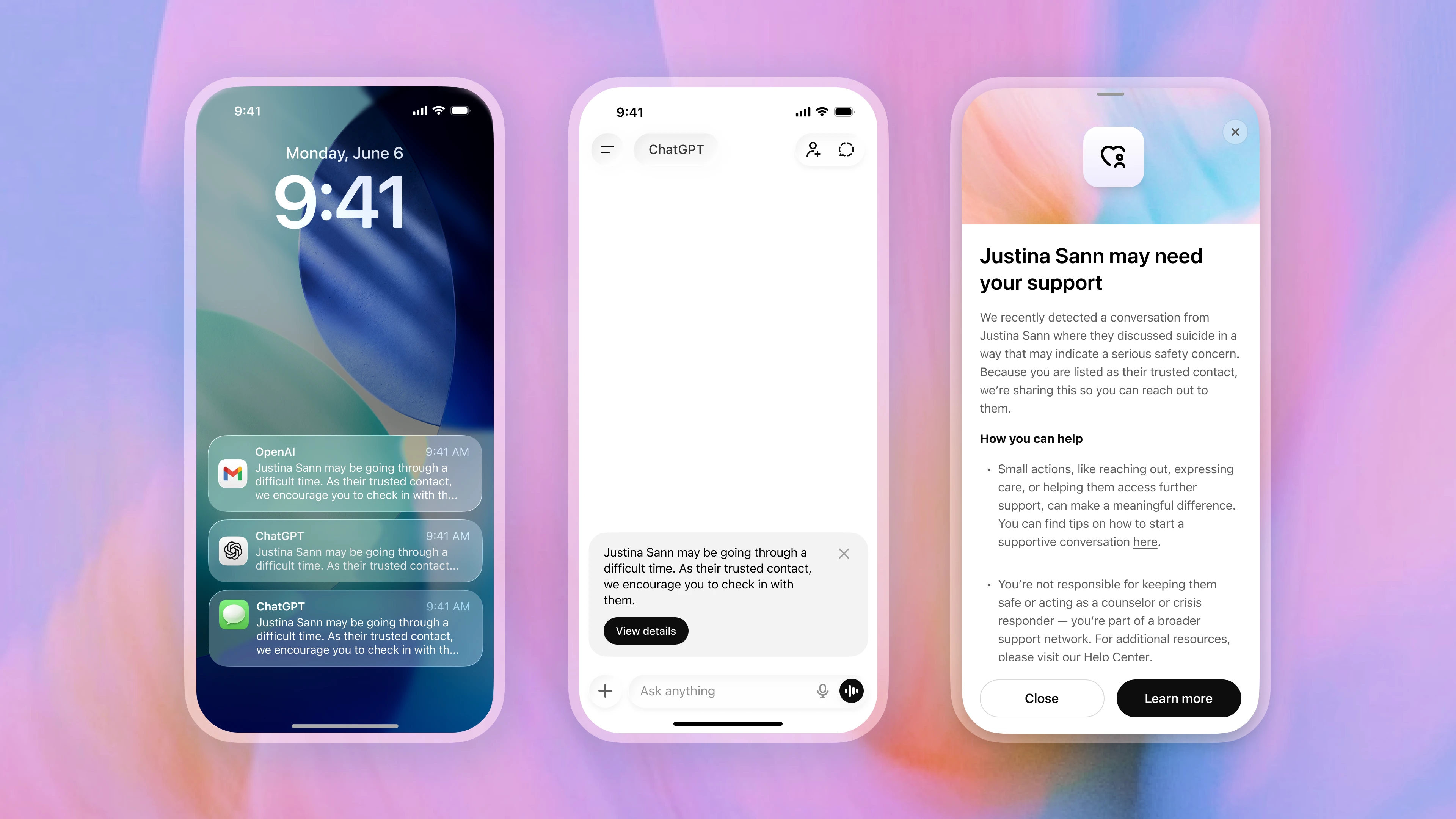

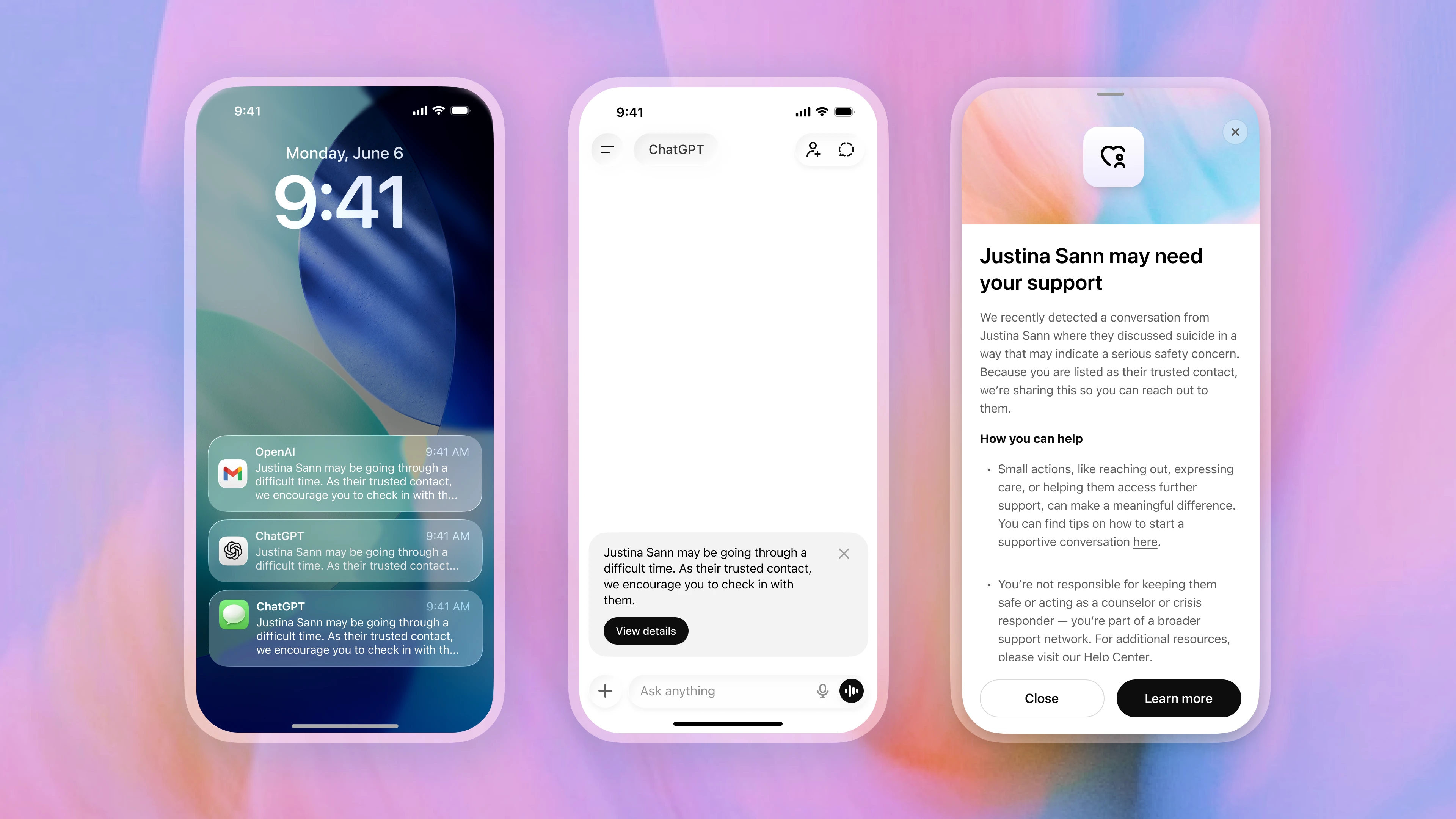

OpenAI said the system combines automated detection with human oversight. Conversations flagged by internal systems are reviewed by a specialized safety team before any notification is sent. If reviewers determine the situation may involve a serious safety concern, the trusted contact can receive a brief alert, sent by email, text message, or in-app notification, that encourages them to check in with the user.

The company emphasized that alerts do not include chat transcripts or detailed conversation content. Instead, notifications provide only limited context, indicating that the topic of self-harm came up in a potentially concerning way. OpenAI said the design is intended to balance intervention with user privacy.

The feature arrives as AI companies face increasing pressure to address the risks associated with emotionally sensitive chatbot interactions. OpenAI has been hit with lawsuits from families alleging ChatGPT contributed to or encouraged suicidal behavior. The company did not directly reference those cases in its announcement, but positioned Trusted Contact as part of a broader effort to improve how AI systems respond during moments of distress.

“Trusted Contact is part of OpenAI’s broader effort to build AI systems that help people during difficult moments,” the company said. “We will continue to work with clinicians, researchers, and policymakers to improve how AI systems respond when people may be experiencing distress.”

OpenAI said Trusted Contact was developed with input from clinicians, researchers, suicide prevention organizations, and its Expert Council on Well-Being and AI. The company also cited guidance from its Global Physicians Network, which includes more than 260 licensed physicians across 60 countries.

“Psychological science consistently shows that social connection is a powerful protective factor, especially during periods of emotional distress,” said Dr. Arthur Evans, chief executive officer of the American Psychological Association. “Helping people identify a trusted person in advance, while preserving their choice and autonomy, can make it easier to reach out to real-world support when it matters most.”

The feature also builds on safeguards OpenAI introduced for teen accounts last year, which allowed parents or guardians to receive safety notifications tied to serious-risk conversations. Trusted Contact extends a similar framework to adult users, though participation remains voluntary and users can remove or change their designated contact at any time.

OpenAI said ChatGPT will continue refusing requests related to suicide instructions or self-harm methods while directing users toward crisis hotlines, emergency services, and mental health professionals when appropriate. The company also said its systems may suggest users take breaks from extended chatbot use in some circumstances.

OpenAI described serious safety situations as rare and said it aims to review these notifications in under one hour before any alert is sent.

This analysis is based on reporting from OpenAI.

Images courtesy of OpenAI.

This article was generated with AI assistance and reviewed for accuracy and quality.