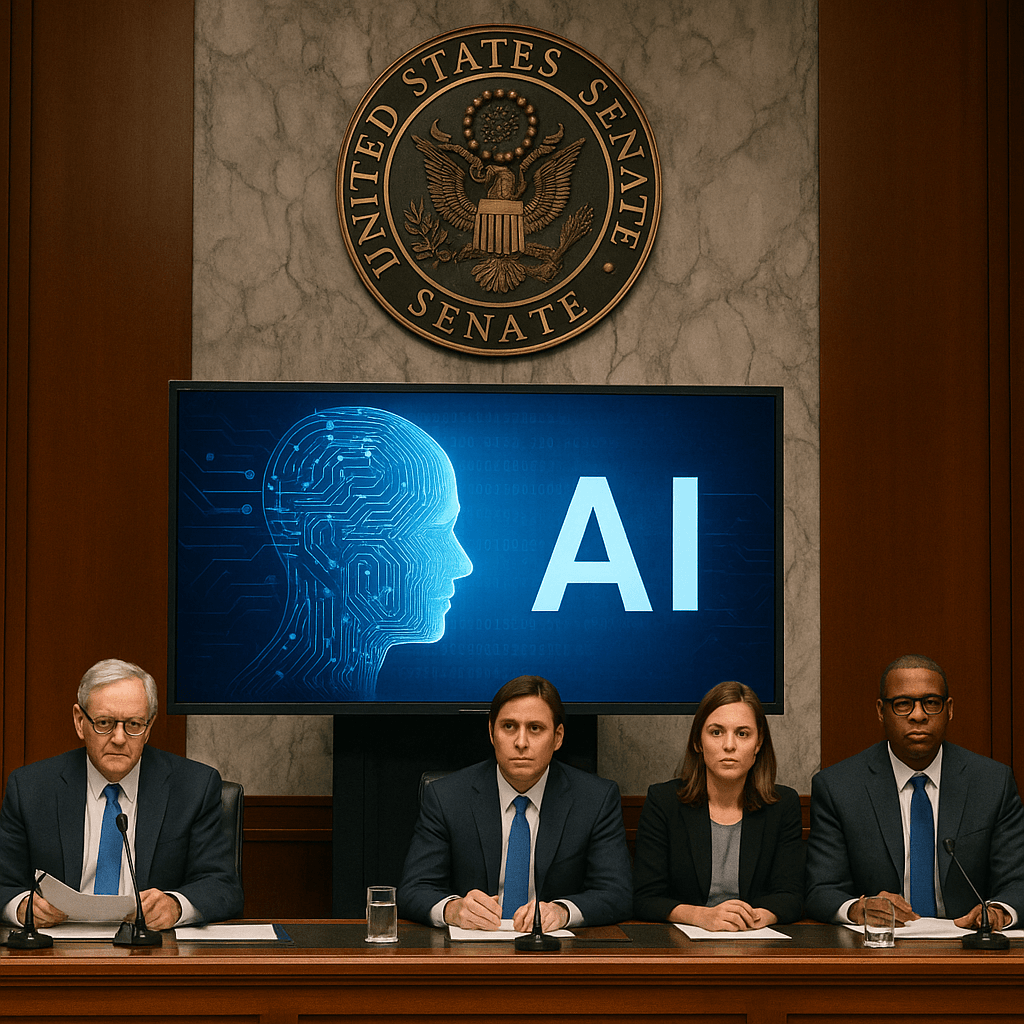

Imagine scrolling through your feed and suddenly stumbling upon a photo of someone you know—except it isn’t really them. It’s a deepfake, disturbingly real, and completely fabricated by AI. For years, technology raced ahead of the rules that should govern it. But now, in 2025, lawmakers are finally catching up. The U.S. government is in the thick of it, wrestling with what it means to legislate in a world where machines can mimic human behavior with eerie accuracy and devastating consequences.

One of the most emotionally charged victories in this battle is the TAKE IT DOWN Act. Born from bipartisan outrage and propelled by survivors’ stories, this legislation targets one of the darker sides of AI: non-consensual intimate imagery, including AI-generated deepfakes. Once such images find their way online, they can cause irreparable harm. Under the new law, platforms must remove this content within 48 hours of receiving a valid removal request. It’s not just a policy; it’s a promise to victims that they’re not alone and that the law stands on their side. The near-unanimous House vote, 409 to 2, shows just how urgently Congress views the issue.